Search is changing fast.

People no longer scroll through ten blue links. They ask questions and expect direct answers. Tools like Google AI Overviews, ChatGPT, voice assistants, and other AI answer engines now decide what information gets shown first.

This shift has created a new discipline called Answer Engine Optimization (AEO). Instead of only ranking pages, the goal is to make your content easy for AI systems to understand, extract, and summarize.

At the same time, the way we send structured data to AI models is also evolving. This is where TOON (Token-Oriented Object Notation) comes in.

In this guide, you’ll learn:

- What TOON is and why it exists

- How it compares to JSON

- When TOON actually makes sense

- How TOON can support AEO workflows

- Practical examples, including organization schema-style data

Let’s start with the basics.

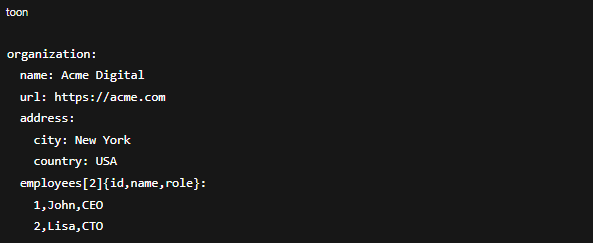

What Is TOON?

TOON stands for Token-Oriented Object Notation.

It is a data format designed specifically for working with large language models (LLMs). The main goal is simple: reduce wasted tokens while keeping data structured and readable.

JSON is great for software systems. But for AI prompts, it creates a lot of unnecessary overhead. Curly braces, repeated keys, quotation marks, and nested syntax all consume tokens.

TOON removes much of that extra formatting and keeps only what the model needs.

In simple terms

Think of TOON as:

- JSON without clutter

- YAML-style readability

- CSV-style efficiency for tables

- Built for AI prompts, not browsers

Why JSON Is Not Ideal for AI and Answer Engines

JSON was never designed for AI consumption. It was built for machine-to-machine communication.

Here’s where JSON struggles in AI workflows:

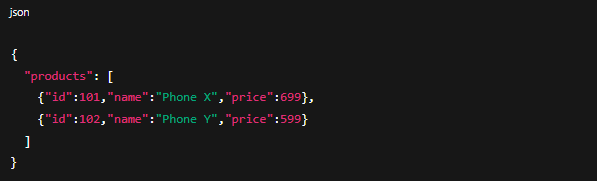

1. Too much repetition

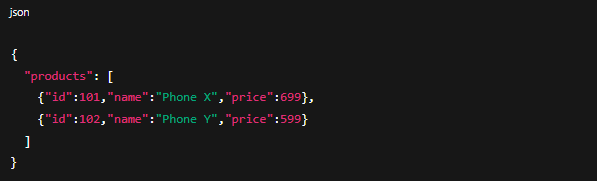

If you send a list of products in JSON, every product object repeats the same keys.

When you have hundreds of rows, this repetition adds thousands of unnecessary tokens.
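To make the repetition concrete, the sketch below serializes a small product list to JSON and counts how often each key name appears. The products and their fields (`id`, `name`, `price`) are assumptions chosen for illustration.

```python
import json

# Hypothetical product rows; the field names are illustrative only.
products = [
    {"id": 1, "name": "Laptop", "price": 999},
    {"id": 2, "name": "Mouse", "price": 25},
    {"id": 3, "name": "Keyboard", "price": 70},
]

payload = json.dumps(products)

# Each key is written once per object, so it appears len(products) times.
key_counts = {key: payload.count(f'"{key}":') for key in products[0]}
print(key_counts)  # {'id': 3, 'name': 3, 'price': 3}
```

With three rows the overhead is small, but the count grows linearly with the number of rows.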

2. Token limits matter

Every AI model has a context window. The more tokens you waste on formatting, the less space you have for:

- Instructions

- Examples

- Supporting data

- Reasoning context

This directly impacts answer quality.

3. Noise for language models

LLMs read patterns. Extra syntax characters like {}, [], and quotes add noise. Fewer formatting tokens may help models focus on actual values and meaning.

What Makes TOON Different?

TOON solves these problems by changing how structured data is represented.

Here are the core ideas.

1. Keys are declared once

Instead of repeating keys for every object, TOON defines them once at the top.

Cleaner. Smaller. Easier for AI to parse.
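A minimal sketch of the "keys declared once" idea is shown here. The `to_toon_table` helper is hypothetical and hand-rolled for this article: it covers only uniform rows with simple values and skips the quoting rules a full TOON encoder would apply.

```python
def to_toon_table(name, rows):
    """Encode a list of uniform dicts as a TOON-style table (simplified)."""
    fields = list(rows[0].keys())
    # Header: array name, row count, and the field names declared once.
    header = f"{name}[{len(rows)}]{{{','.join(fields)}}}:"
    # Body: one indented, comma-separated line per row, values only.
    lines = ["  " + ",".join(str(row[f]) for f in fields) for row in rows]
    return "\n".join([header] + lines)


# Hypothetical sample data.
users = [
    {"id": 1, "name": "Alice", "role": "admin"},
    {"id": 2, "name": "Bob", "role": "user"},
]
print(to_toon_table("users", users))
# users[2]{id,name,role}:
#   1,Alice,admin
#   2,Bob,user
```

The field names appear once in the header, and every following line carries values only.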

2. Array length is explicit

TOON declares how many rows an array contains, directly in the array header.

This gives the model an explicit structural cue and may reduce the risk of dropped or invented rows.
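One practical use of an explicit length is a completeness check on a table before it goes into a prompt, or after a model returns one. The sketch below assumes a single TOON-style table whose header looks like `name[N]{...}:`. The `check_declared_length` helper is hypothetical, not part of any TOON library.

```python
import re


def check_declared_length(toon_text):
    """Return (declared, actual) row counts for a single TOON-style table."""
    header, *rows = toon_text.strip().splitlines()
    match = re.match(r"^\w+\[(\d+)\]", header)
    if match is None:
        raise ValueError("header does not declare a length")
    declared = int(match.group(1))
    # Count non-empty data lines below the header.
    actual = sum(1 for row in rows if row.strip())
    return declared, actual


# Hypothetical table where one row has gone missing.
table = """products[3]{id,name}:
  1,Laptop
  2,Mouse
"""
declared, actual = check_declared_length(table)
print(declared, actual)  # 3 2 -> one row is missing
```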

3. Human-readable layout

TOON uses indentation similar to YAML. Even without tools, humans can quickly scan it.

4. Token savings are real

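Reported benchmarks show TOON often reduces token usage by 30% to 60% compared to JSON, mainly on uniform, tabular data. Actual savings depend on the shape of the data and the tokenizer. Fewer tokens lower API costs and leave more room for context inside prompts.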

Simple Comparison: JSON vs TOON

Example: User profile

Same meaning. Fewer tokens.
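The user profile comparison can be sketched as follows. The profile fields are invented for illustration, and `to_toon_object` is a hypothetical helper that handles only flat objects with simple values.

```python
import json

# Hypothetical user profile.
profile = {"id": 42, "name": "Alice", "active": True}


def to_toon_object(obj):
    """Render a flat dict as TOON-style 'key: value' lines (simplified)."""
    lines = []
    for key, value in obj.items():
        # Booleans are written lowercase, as in JSON.
        if isinstance(value, bool):
            value = "true" if value else "false"
        lines.append(f"{key}: {value}")
    return "\n".join(lines)


as_json = json.dumps(profile, indent=2)
as_toon = to_toon_object(profile)

print(as_json)
print(as_toon)
# id: 42
# name: Alice
# active: true
```

For a single small object the difference is modest. The braces, quotes, and commas are what disappear.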

Example: Product list

Now imagine this with 1,000 products. That’s where TOON shines.
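To get a rough feel for the 1,000-product case, the sketch below generates synthetic products and compares the two encodings by character count. Character count is only a proxy: real token counts depend on the model's tokenizer. The product fields and the `to_toon_table` helper are assumptions for this example.

```python
import json


def to_toon_table(name, rows):
    """Simplified TOON-style table: keys once in the header, then value rows."""
    fields = list(rows[0].keys())
    header = f"{name}[{len(rows)}]{{{','.join(fields)}}}:"
    body = ["  " + ",".join(str(row[f]) for f in fields) for row in rows]
    return "\n".join([header] + body)


# 1,000 synthetic products; SKUs, names, and prices are made up.
products = [
    {"sku": f"P{i:04d}", "name": f"Item {i}", "price": 10 + i}
    for i in range(1000)
]

json_chars = len(json.dumps(products))
toon_chars = len(to_toon_table("products", products))

print(json_chars, toon_chars)
print(f"TOON-style output is {1 - toon_chars / json_chars:.0%} smaller by character count")
```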

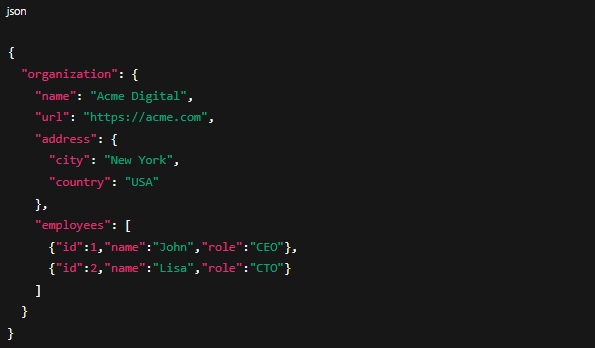

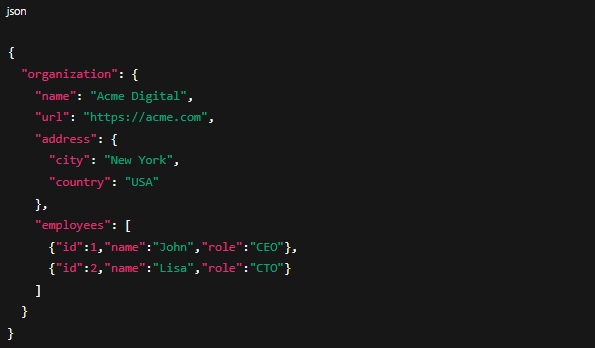

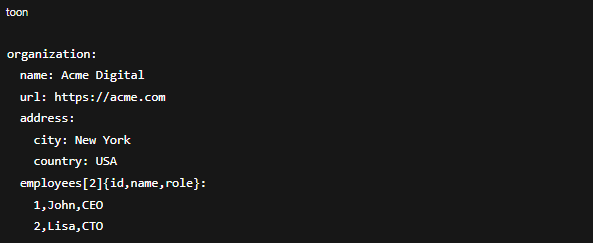

Example: Organization Schema-Style Data

Let’s say you want to provide organization information to an AI model for knowledge graph building, brand summaries, or internal answer engines.

This format is:

- Easier for models to scan

- More compact

- Better suited for prompt-based knowledge extraction
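An organization record of this kind might be encoded as sketched below. The property names follow schema.org's Organization type, while the company, URLs, and emails are placeholders. The `encode_org` helper is hypothetical, and its quoting rule (quote any value containing a comma, colon, or double quote) is a simplification of what a full TOON encoder would do.

```python
import json


def quote(value):
    """Quote values that contain characters with structural meaning."""
    text = str(value)
    if any(ch in text for ch in ',:"') or text != text.strip():
        return json.dumps(text)
    return text


def encode_org(org):
    lines = ["organization:"]
    for key, value in org.items():
        if isinstance(value, list) and value and isinstance(value[0], dict):
            # Array of uniform objects: header with fields, then value rows.
            fields = list(value[0].keys())
            lines.append(f"  {key}[{len(value)}]{{{','.join(fields)}}}:")
            for row in value:
                lines.append("    " + ",".join(quote(row[f]) for f in fields))
        elif isinstance(value, list):
            # Array of simple values on one line.
            lines.append(f"  {key}[{len(value)}]: " + ",".join(quote(v) for v in value))
        else:
            lines.append(f"  {key}: {quote(value)}")
    return "\n".join(lines)


# Placeholder organization data.
org = {
    "name": "Example Corp",
    "url": "https://example.com",
    "foundingDate": "2015-03-01",
    "sameAs": ["https://www.linkedin.com/company/example", "https://x.com/example"],
    "contactPoint": [
        {"contactType": "sales", "email": "sales@example.com"},
        {"contactType": "support", "email": "support@example.com"},
    ],
}
print(encode_org(org))
```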

How TOON Connects With Answer Engine Optimization (AEO)

Now let’s connect TOON to AEO.

AEO focuses on making content easy for AI systems to extract answers from. This includes:

- Structured FAQs

- Product attributes

- Knowledge base entries

- Comparison tables

- Business facts

When you use AI tools internally to:

- Generate answer snippets

- Validate schema content

- Build internal search assistants

- Power chatbots

- Prepare AI training data

You often send structured data into models.

This is where TOON adds value.

Example: Feeding product data to an AI assistant

Instead of sending bulky JSON that compares battery life across multiple phones, you can send the same data as a compact TOON table.

Now the AI can answer:

“Which phone has the best battery life?”

With less token cost and clearer structure.
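As a rough sketch of that workflow, the snippet below builds a prompt that embeds a TOON-style table followed by the question. The phone models, battery figures, and prices are invented, and no specific model API is assumed. The result is a string you would pass to whichever client you use.

```python
# Hypothetical phones and battery figures, for illustration only.
phones = [
    {"model": "Phone A", "battery_hours": 22, "price_usd": 799},
    {"model": "Phone B", "battery_hours": 28, "price_usd": 699},
    {"model": "Phone C", "battery_hours": 19, "price_usd": 899},
]

# Build the TOON-style table: header with keys once, then value rows.
fields = list(phones[0].keys())
table = [f"phones[{len(phones)}]{{{','.join(fields)}}}:"]
table += ["  " + ",".join(str(p[f]) for f in fields) for p in phones]

prompt = (
    "Answer using only the data below.\n\n"
    + "\n".join(table)
    + "\n\nQuestion: Which phone has the best battery life?"
)
print(prompt)
```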

Why this matters for AEO teams

Better prompts mean:

- More accurate AI-generated answers

- Less hallucination

- More context per request

- Lower infrastructure cost

- Better internal content validation

That directly supports high-quality answer engine visibility.

As part of this shift, many teams are now adopting AI visibility analytics to measure how often their brand, products, and content appear inside AI-generated answers, summaries, and knowledge responses. While TOON does not directly influence rankings, improving how structured website data is processed by AI systems can support cleaner AI outputs that feed into these emerging visibility metrics.

Important Clarification: TOON vs JSON-LD

TOON does not replace JSON-LD on your website.

Search engines still read schema markup from web-standard formats such as JSON-LD (the format Google recommends), Microdata, and RDFa. They do not read TOON.

Use:

- JSON-LD for publishing structured data on web pages

- TOON for AI workflows, prompt engineering, internal tools, and data pipelines

They solve different problems.
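The split can be sketched with one set of facts rendered two ways: JSON-LD in a script tag for the page, and TOON-style lines for a prompt. The organization details are placeholders, and the quoting rule in `toon_value` is a simplified assumption.

```python
import json

# Hypothetical organization facts shared by both outputs.
facts = {"name": "Example Corp", "url": "https://example.com"}

# For the web page: JSON-LD wrapped in a script tag.
json_ld = {"@context": "https://schema.org", "@type": "Organization", **facts}
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(json_ld, indent=2)
    + "\n</script>"
)


# For an LLM prompt: the same facts as TOON-style "key: value" lines.
# Values containing a comma, colon, or quote are quoted (simplified rule).
def toon_value(text):
    return json.dumps(text) if any(ch in text for ch in ',:"') else text


prompt_block = "\n".join(f"{key}: {toon_value(value)}" for key, value in facts.items())

print(script_tag)
print(prompt_block)
```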

When You Should Use TOON

TOON works best when:

- You send large uniform datasets to LLMs

- You work with tables or catalogs

- You build AI-powered search or assistants

- You optimize prompt efficiency

- You generate structured answers at scale

When You Should Avoid TOON

Stick with JSON when:

- Data is deeply nested and irregular

- Structures change frequently

- Human developers need strict validation tooling

- Browser compatibility is required

For flat tables, CSV may even outperform TOON.

How to Start Using TOON

TOON is NOT implemented on your website the way schema markup, HTML structure, or JSON-LD are.

It is a way to convert bulky JSON data into a token-oriented format that an AI system can read more efficiently.

From an SEO perspective, TOON does not replace any crawl-layer optimizations. Search engines and AI-powered crawlers still rely on traditional on-page signals such as semantic HTML, structured data markup, internal linking, and content structure.

TOON is used at the data processing layer, after website content has already been indexed or extracted.

In practical terms, TOON becomes relevant when structured website data is exported for AI-driven content workflows. This includes scenarios such as preparing FAQ datasets, product catalogs, service information, or knowledge base content that is later used by AI tools for summarization, validation, or answer generation.

Instead of passing large JSON files into these systems, TOON allows the same structured information to be represented in a more compact and AI-efficient format. This helps reduce processing overhead while preserving data structure.
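A small export step along these lines might look like the sketch below. It takes an FAQ export in JSON (inlined here as a string standing in for a file), converts it to a TOON-style table, and quotes any cell that contains a comma, colon, or quote. The FAQ content and the `cell` helper are assumptions, and the quoting rule is simplified.

```python
import json

# Stand-in for an exported FAQ file; the content is illustrative.
faq_export = '''[
  {"question": "What is TOON?", "answer": "A compact data format for LLM prompts."},
  {"question": "Does TOON replace JSON-LD?", "answer": "No, JSON-LD stays on the web page."}
]'''


def cell(value):
    """Quote a cell if it contains a delimiter, colon, quote, or newline."""
    text = str(value)
    needs_quotes = any(ch in text for ch in ',:"\n') or text != text.strip()
    return json.dumps(text) if needs_quotes else text


rows = json.loads(faq_export)
fields = list(rows[0].keys())
lines = [f"faqs[{len(rows)}]{{{','.join(fields)}}}:"]
lines += ["  " + ",".join(cell(r[f]) for f in fields) for r in rows]
toon = "\n".join(lines)

print(toon)
# faqs[2]{question,answer}:
#   What is TOON?,A compact data format for LLM prompts.
#   Does TOON replace JSON-LD?,"No, JSON-LD stays on the web page."
```

Free-text fields such as answers often contain commas, so quoting matters more here than in a numeric product table.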

Final Thoughts

TOON is not a magic replacement for JSON.

But it solves a real problem in AI workflows: wasted tokens and inefficient data formatting.

For teams working on:

- AEO strategies

- AI search experiences

- Knowledge automation

- Prompt optimization

- Internal answer engines

TOON offers a practical advantage.

When combined with strong AEO foundations, such as clear content structure, schema markup, and question-based formatting, TOON can help you move faster and scale smarter in the AI-driven search era.
